Per-Token Down. Per-Task Up.

The unit of AI economics is breaking. A new vocabulary is filling the gap.

Per-token cost is down roughly thirtyfold since GPT-4 launched. Enterprise GenAI spend tripled in 2025.

Same window. Opposite directions.

The vendor is correct. The CFO is correct. They are reading different units off the same invoice — and the meter that connects them, the token, is dissolving in real time.

For thirty months we’ve inherited a unit of measure from the cloud era and applied it to a technology that breaks the assumption underneath it. In the cloud, compute consumption was a reasonable proxy for work done: run more queries, get more answers, monotonic and well-behaved. AI breaks that proxy. A token tells you nothing about whether the task succeeded. A GPU-hour tells you nothing about whether the agent helped or hindered. The new pricing decks still pretend the proxy holds.

The Cloud Made the Token Look Like Money

When we wrote our first procurement agreements for AI, we copied the cloud playbook because it was the only one we had. AWS bills you for compute; OpenAI bills you for tokens; both feel like utilities, and both go on a finance dashboard the same way. The meter is sticky for the same reason kilowatt-hours are sticky: the unit travels well across vendors, contracts, and procurement teams.

The problem is that electricity is a fungible commodity whose value is invariant across uses. A token isn’t. Two thousand reasoning tokens that solve a real customer problem are not equivalent to two thousand that confidently produce a wrong answer. The unit collapses the difference between work and motion.

People optimize the meter while the mission goes off the rails.

Per-Token Down. Per-Task Up.

The inversion is not a forecast. It is what the dashboards are showing right now. The list price tells one story. The invoice tells another. Three mechanisms drive the divergence, and they compound.

Reasoning inflation. Reasoning models consume ten to fifty times more tokens thinking through an answer than producing it, billed at the output rate. A query that costs four-tenths of a cent on a chat-mode model can cost dollars on a reasoning model, not because the price per token jumped but because the token count did.
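
A minimal sketch of that arithmetic, under stated assumptions: the $10-per-million output price and the 400-token answer are hypothetical values chosen so the chat-mode call lands at four-tenths of a cent, and `query_cost` is an illustrative helper, not any vendor's billing formula. Input tokens are ignored to isolate the reasoning effect. The per-token price stays fixed across all three calls; only the token count moves.

```python
def query_cost(visible_output_tokens: int,
               reasoning_multiplier: float,
               output_price_per_million: float) -> float:
    """Output-side dollar cost of one call, with reasoning tokens billed at the output rate."""
    reasoning_tokens = visible_output_tokens * reasoning_multiplier
    billed_tokens = visible_output_tokens + reasoning_tokens
    return billed_tokens * output_price_per_million / 1_000_000


# Hypothetical inputs, not taken from any price list.
OUTPUT_PRICE_PER_MILLION = 10.0   # dollars per million output tokens (assumed)
ANSWER_TOKENS = 400               # visible answer length (assumed)

chat_cost = query_cost(ANSWER_TOKENS, 0, OUTPUT_PRICE_PER_MILLION)
reasoning_low = query_cost(ANSWER_TOKENS, 10, OUTPUT_PRICE_PER_MILLION)   # 10x thinking
reasoning_high = query_cost(ANSWER_TOKENS, 50, OUTPUT_PRICE_PER_MILLION)  # 50x thinking

for label, cost in [("chat", chat_cost),
                    ("reasoning, 10x", reasoning_low),
                    ("reasoning, 50x", reasoning_high)]:
    print(f"{label:>15}: ${cost:.4f} per call ({cost / chat_cost:.0f}x chat)")
```

A single call in this sketch stays under a dollar; reaching dollars per query additionally requires longer answers, larger contexts, or multiple calls, which is where the next mechanism comes in.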

Agent fanout. A single user prompt becomes ten, twenty, a hundred model calls as the system decomposes the task, retrieves context, calls tools, retries, and self-corrects. Replit Agent 3 launched in September, and within weeks The Register was documenting users whose bills had jumped from the low hundreds of dollars per month to hundreds, or even $1,000, within days, as Agent 3 performed more work under the hood.

Pricing translation failure. Flat subscriptions, per-seat licenses, and per-request units stopped mapping to what the vendor actually pays in compute. Cursor moved to usage-based credits in June 2025; users who’d been paying $100 a month started seeing $20 to $30 a day on identical workflows, and the CEO eventually issued a public apology. Anthropic shipped Opus 4.7 with a new tokenizer that, as Simon Willison documented, can map identical text to materially more tokens. The list price didn’t change. The effective price did.

GitHub’s CPO, Mario Rodriguez, named it cleanly when GitHub announced in April that Copilot would move to usage-based billing starting June 1: “a quick chat question and a multi-hour autonomous coding session can cost the user the same amount.” GitHub had been absorbing the difference for months. The current model was, in his words, no longer sustainable.

Per-token cost has fallen roughly thirtyfold since GPT-4. On the same task classes, the effective cost per completed task can rise by multiples even as the public per-token rate falls. The CFO who optimized the API line on a 2024 plan is staring at an invoice that is not arithmetically reconcilable with the press release announcing the price drop.
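
The reconciliation is a single ratio: per-task cost scales with tokens per task divided by the price drop. The sketch below assumes hypothetical growth factors (20 calls per task from fanout, 10x tokens per call from reasoning); only the thirtyfold price drop comes from the figures above, and `task_cost_ratio` is an illustrative name.

```python
def task_cost_ratio(price_drop_factor: float, tokens_per_task_growth: float) -> float:
    """New per-task cost divided by old per-task cost.

    price_drop_factor: how many times cheaper each token became.
    tokens_per_task_growth: how many times more tokens one task now consumes.
    """
    return tokens_per_task_growth / price_drop_factor


PRICE_DROP = 30.0        # the thirtyfold per-token decline cited above
CALLS_PER_TASK = 20.0    # assumed fanout: one prompt becomes 20 model calls
TOKENS_PER_CALL = 10.0   # assumed reasoning inflation per call

token_growth = CALLS_PER_TASK * TOKENS_PER_CALL   # the mechanisms multiply
ratio = task_cost_ratio(PRICE_DROP, token_growth)
break_even = task_cost_ratio(PRICE_DROP, PRICE_DROP)

print(f"tokens per task grew {token_growth:.0f}x")
print(f"per-task cost changed by {ratio:.2f}x despite a {PRICE_DROP:.0f}x price drop")
print(f"break-even ratio when token growth equals the price drop: {break_even:.1f}")
```

Under these assumptions the task costs several times more than before. Any token growth above thirtyfold erases the price cut entirely.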

The failure mode is simple enough to name without jargon. The agent ran. It didn’t do the thing you asked. The per-token meter doesn’t know the difference.

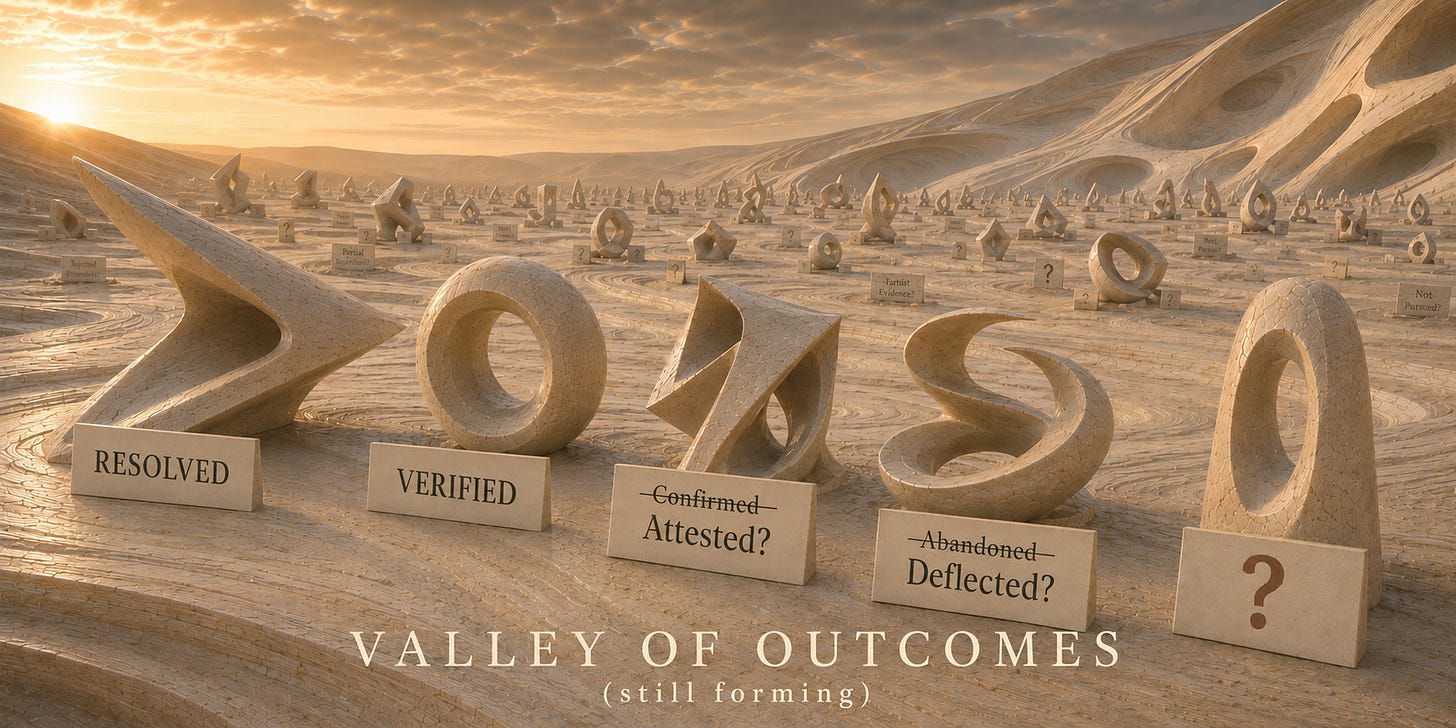

The New Units Are Forming. Nobody Has Standardized Them.

The replacement unit is not theoretical. It has been forming for two years inside the practitioner vocabulary: cost-per-resolved-task, cost-per-attested-decision, cost-per-verified-outcome. These are not slogans. They are the metrics enterprises wish their procurement teams were measuring.

The bench of vendors already pricing in these units is broader than most enterprise buyers realize. Bret Taylor’s Sierra has reportedly crossed $100M ARR on pure outcome pricing: only paid when the agent resolves the issue, saves the cancellation, or completes the upsell. Taylor’s framing on the Cheeky Pint podcast: “The whole market is going to go towards agents and towards outcomes-based pricing… It’s just so obviously the correct way to build and sell software.” Zendesk became the first major incumbent SaaS vendor to migrate off seats, pricing Automated Resolutions at $1.50 each. ServiceNow restructured Now Assist into outcome tiers in April, with leadership messaging that customers are paying not for tokens but for resolved outcomes. Crescendo and other CX vendors are pushing toward per-resolution pricing, with quality and CSAT entering the pricing conversation. Tying price to CSAT is the sharpest verifier move available, because it grades the agent the way the customer would.

The Aider leaderboard is the existence proof on the technical side. It publishes pass-rate and total dollar cost across every major coding agent on a public benchmark, updated continuously. Cost-per-resolved-issue falls out directly. Look at it once and the per-token framing stops being legible. Two stacks with identical per-token cost can have a five-fold difference in cost-per-resolved-issue, because one of them solves the problem and the other one burns tokens politely failing.
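
To make the derivation concrete, here is a sketch of how cost-per-resolved-issue falls out of a pass rate and a total dollar cost. The two stacks and all their numbers are invented for illustration and are not leaderboard data; `BenchmarkRun` is a hypothetical record type. Both stacks are assumed to pay the same per-token price, with stack B using 2.5 times as many tokens per attempt.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BenchmarkRun:
    """One stack's results on a fixed benchmark (hypothetical record type)."""
    name: str
    issues_attempted: int
    pass_rate: float        # fraction of issues whose tests pass
    total_cost_usd: float   # total inference spend for the whole run

    @property
    def resolved(self) -> float:
        return self.issues_attempted * self.pass_rate

    @property
    def cost_per_resolved_issue(self) -> float:
        # A stack that resolves nothing has no finite cost per resolution.
        if self.resolved == 0:
            return float("inf")
        return self.total_cost_usd / self.resolved


# Invented numbers: same per-token price, different token usage and pass rate.
stack_a = BenchmarkRun("stack_a", issues_attempted=200, pass_rate=0.50, total_cost_usd=20.0)
stack_b = BenchmarkRun("stack_b", issues_attempted=200, pass_rate=0.25, total_cost_usd=50.0)

for run in (stack_a, stack_b):
    print(f"{run.name}: ${run.cost_per_resolved_issue:.2f} per resolved issue")

gap = stack_b.cost_per_resolved_issue / stack_a.cost_per_resolved_issue
print(f"gap: {gap:.1f}x")
```

The gap comes from two factors multiplying: stack B spends more per attempt and converts fewer attempts into resolutions. Neither factor is visible in the per-token price.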

Code is the easy case. It has a built-in oracle: the test suite either passes or it doesn’t. Customer support, decision support, and report generation don’t have a test suite waiting in the repo, and that is the harder problem. Building an LLM-as-judge for “did this support ticket actually get resolved” has its own well-known failure modes: judges drift, share biases with the agents they grade, and can be gamed by the same patterns. And attribution is its own problem: when a ticket gets touched by a deflection bot, a routing layer, a human agent, and a follow-up automation, deciding whose outcome to score is sometimes harder than building the verifier.

Intercom’s Fin AI prices per resolution but counts customers who exit without escalating as “assumed resolutions,” a definitional shortcut that, by the time it reaches the audit committee, will not look like an outcome metric. Useful, but not a benchmark. The cross-vendor data exists in observability platforms. It is not published. That is a real gap, and it is also a real opportunity.

The Outcome Meter Used to Be Too Expensive

The objection writes itself. Outcome metrics have been the holy grail since Wanamaker complained that half his advertising spend was wasted and he didn’t know which half. That is why we use input proxies. Cost-per-token is legible. Cost-per-attested-decision is squishy. The argument from intractability has been right before: about employee productivity, about advertising spend, about consultant ROI. Every era has tried to replace the input meter with the outcome meter and most have failed.

Half right.

The mistake is assuming this era has the same constraints as the last one. The reason cost-per-outcome was impossible to measure before is that the outcome had to be assessed by humans, sampled, reported up a chain, and reconciled. Each step introduced friction that exceeded the value of the measurement. AI removes a lot of that friction, even where it doesn’t remove all of it. Aider doesn’t ask a human reviewer whether the issue was resolved; it runs the test suite. The same logic is reachable in customer-facing work: a CSAT score the customer left, a chargeback ruling from the card network, a contract closed without dispute. The verifier is the same kind of thing as the producer, and where it works, it scales the same way the producer does.

Outcome measurement was never impossible. It was uneconomic. That constraint is bending fast.

The Meter Is the Argument Now

Once you accept the input meter is wrong, the strategic question becomes: who decides what the right one is.

Vendors have an obvious incentive to keep the per-token meter running, because it indexes on what they sell. Customers have an obvious incentive to migrate to outcome units, because it indexes on what they buy. Between them sit the analysts, the consultants, the procurement frameworks, all built on input-side TCO calculators that no longer reflect what is being purchased.

Even the vendors are conceding the unit is moving. Satya Nadella, on Microsoft’s Q3 FY26 earnings call: “Any per user business of ours, whether it’s productivity or coding or security, will become a per user and usage business.” The company that perfected the per-seat SaaS model is now saying the next model is per-user plus usage. Anthropic cut off third-party tools running through its $200/month Claude Max subscription in April after some customers were burning through five thousand dollars of compute on the plan. The same June Copilot repricing pushed the Claude Opus 4.7 multiplier from 7.5x to 27x, a roughly 3.6x effective price increase that was overdue but not announced until it was unavoidable.

The first wave of enterprise AI economics was a vendor-defined regime. The second will be defined by whoever publishes the first credible benchmark and gets it adopted. Stanford HAI put it plainly: the era of AI evangelism is giving way to an era of AI evaluation. Whoever builds the evaluation framework sets the terms.

The opportunity is hiding in the data void. Observability platforms have the cost-per-outcome telemetry; none of them have published it cross-vendor. The Aider leaderboard is a model nobody has copied for the workloads where the spend is concentrated. The benchmarks for those categories are sitting unbuilt, and they are the artifacts that will retire the per-token meter when they ship. The governance layer underneath — chargeback attribution, routing policy, the AI FinOps function Fred Brown describes — is the organizational answer to who actually owns the new unit once it exists.

If the substrate eventually shifts to world models or some other non-LLM architecture, the unit will shift again. That doesn’t rescue the token.

The Meter Is Not the Mission

Per-token cost will keep falling. DeepSeek will keep dropping the floor. The vendor headlines will keep saying deflation. None of that is the interesting question anymore.

The interesting question is who sets the next unit. Vendors have every reason to drag this out: the existing meter is what they sell. The enterprise that moves first on instrumentation doesn’t wait for the standard to form; it shapes it. The first firm to publish a credible cost-per-outcome benchmark for customer support, for analyst workflows, for board-level decision support owns the framing for a cycle. That is not a research project. It is a competitive position.

The thing to do is not negotiate cheaper tokens. It is to instrument the workflow before the benchmark exists: task attempted, model path, total inference cost, verification result, human rework, business outcome. The firms building that layer now will have the data when the standard forms. The firms waiting for the standard to form first will be reading someone else’s definition of done.
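
One way that instrumentation layer might look, as a minimal sketch: a record carrying the six fields listed above, and a roll-up into cost per verified outcome. Every name here (`TaskRecord`, `cost_per_verified_outcome`, the field names, the $60 hourly rework rate, the sample data) is an assumption made for illustration, not an established schema.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class TaskRecord:
    """One attempted task, logged end to end (hypothetical schema)."""
    task_attempted: str
    model_path: str              # which models and tools the request touched
    inference_cost_usd: float    # total inference cost across all calls
    verified: bool               # did the verifier accept the result?
    human_rework_minutes: float  # human time spent fixing or redoing the work
    business_outcome: str        # free-text or coded outcome label


def cost_per_verified_outcome(records: Iterable[TaskRecord],
                              rework_rate_usd_per_hour: float) -> float:
    """Total spend (inference plus human rework) divided by verified tasks."""
    records = list(records)
    inference = sum(r.inference_cost_usd for r in records)
    rework = sum(r.human_rework_minutes for r in records) / 60 * rework_rate_usd_per_hour
    verified = sum(1 for r in records if r.verified)
    if verified == 0:
        return float("inf")
    return (inference + rework) / verified


# Invented sample data.
log = [
    TaskRecord("refund request", "router>model_x", 0.40, True, 0, "refund issued"),
    TaskRecord("billing dispute", "router>model_x>model_y", 1.20, False, 15, "escalated"),
    TaskRecord("address change", "router>model_x", 0.30, True, 5, "record updated"),
]

unit_cost = cost_per_verified_outcome(log, rework_rate_usd_per_hour=60.0)
print(f"cost per verified outcome: ${unit_cost:.2f}")
```

In this sample the failed task and the rework it triggered dominate the unit cost, while inference is a small fraction of it. That is the picture a per-token invoice cannot show.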

Same window. The vendor headline says costs are falling. The enterprise that built the outcome layer knows what it’s actually buying — and what it isn’t.

The token had a good run. It is not what we are buying anymore.